File information

Created by: whatever

Uploaded by: giamel

Virus scan: Safe to use

About this mod

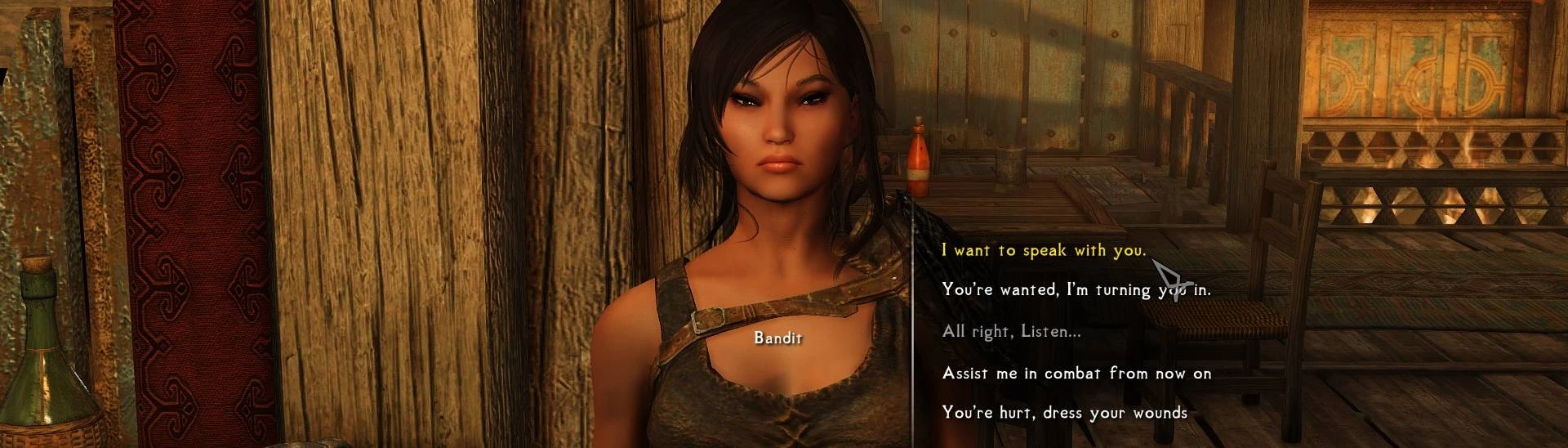

Simply allows for a Mantella interaction via dialogue menu

- Requirements

Nexus requirements

| Mod name | Notes |
| --- | --- |
| Mantella - Bring NPCs to Life with AI | and its requirements |

- Permissions and credits

Credits and distribution permission

- Other user's assets: This author has not specified whether they have used assets from other authors or not

- Upload permission: You are not allowed to upload this file to other sites under any circumstances

- Modification permission: You must get permission from me before you are allowed to modify my files to improve it

- Conversion permission: You are not allowed to convert this file to work on other games under any circumstances

- Asset use permission: You must get permission from me before you are allowed to use any of the assets in this file

- Asset use permission in mods/files that are being sold: You are not allowed to use assets from this file in any mods/files that are being sold, for money, on Steam Workshop or other platforms

- Asset use permission in mods/files that earn donation points: You are allowed to earn Donation Points for your mods if they use my assets

Author notes

This author has not provided any additional notes regarding file permissions

File credits

This author has not credited anyone else in this file

Donation Points system

This mod is opted-in to receive Donation Points

If you are like me and don't want to use your mic for fear of spitting Doritos all over the place, or don't want to cast spells because spells are best cast by girls, here you go.

This simply allows for a Mantella interaction via dialogue menu.

"What is Mantella?" you ask, like I did two days ago. Well, it lets you converse, using artificial intelligence, with any NPC in Skyrim that uses a vanilla voice and, with some extra work, even custom voices.

Benefits (if set up with textgen):

- Completely free

- Completely offline

- Use of an uncensored LLM *he whispers softly*

I know 90% of you have sensitive clicking fingers, but show ArtFromTheMachine some love for doing such incredible work and endorse Mantella while you are over there.

Here is my Mantella config.ini

[Paths]

; Directories used by Mantella

; skyrim_folder:

; If you are using a Wabbajack modlist, Mod Organizer 2 may be storing your Skyrim folder in MO2\overwrite\Root

; If that is the case, try setting this path as your skyrim_folder if pointing to your actual Skyrim folder doesn't work

; If this path is incorrect, casting a spell on an NPC will only end a conversation

; default = C:\Games\Steam\steamapps\common\Skyrim Special Edition

skyrim_folder = H:\SkyrimSE

; xvasynth_folder:

; The folder you have xVASynth downloaded to (the folder that contains xVASynth.exe)

; default = C:\Games\Steam\steamapps\common\xVASynth

xvasynth_folder = H:\xVASynth_3.0

; mod_folder:

; This is the path to the Mantella spell

; If you are using Mod Organizer 2, this path can be found by right-clicking the Mantella mod in your mod list

; and selecting "Open in Explorer"

; If you are using Vortex, this path needs to be set to your Skyrim\Data folder

; eg C:\Games\Steam\steamapps\common\Skyrim Special Edition\Data

; If this path is incorrect, NPCs will say the same voiceline on repeat

; default = C:\Modding\MO2\mods\Mantella

mod_folder = H:\MO2\mods\Mantella_Spell-98631-0-7-1693656376

[Language]

; language:

; The language used by ChatGPT, xVASynth, and Whisper

; Options: en, ar, da, de, el, es, fi, fr, hu, it, ko, nl, pl, pt, ro, ru, sv, sw, uk, ha, tr, vi, yo

language = en

; end_conversation_keyword:

; The keyword Mantella will listen out for to end the conversation (you can also end conversations by re-casting the Mantella spell)

end_conversation_keyword = Goodbye

; goodbye_npc_response

; The response the NPC gives at the end of the conversation

goodbye_npc_response = Safe travels

; collecting_thoughts_npc_response

; The response the NPC gives when they need to summarise the conversation because the maximum token count has been reached

collecting_thoughts_npc_response = I need to gather my thoughts for a moment

[Microphone]

; microphone_enabled

; Whether to use microphone input (1) or text input (0)

; Options: 0, 1

microphone_enabled = 0

; model_size:

; The size of the Whisper model used. Some languages require larger models. The base.en model works well enough for English

; See here for a comparison of languages and their Whisper performance:

; https://github.com/openai/whisper#available-models-and-languages

; Options: tiny, tiny.en, base, base.en, small, small.en, medium, medium.en, large-v1, or large-v2

model_size = base

; process_device:

; Whether to run Whisper on your CPU or NVIDIA GPU (with CUDA installed)

; Options: cpu, cuda

process_device = cpu

; audio_threshold:

; Controls how much background noise is filtered out

; If the mic is not picking up speech, try lowering this value

; If the mic is picking up too much background noise, try increasing this value

; Set this value to auto to let the script decide (only recommended if you are trying to fix mic issues, otherwise this option can be inconsistent)

; It is better to find the right fixed number for your mic in the long run

; Options: auto, 1-999

; Recommended: 175

audio_threshold = 175

; pause_threshold

; How long to wait (in seconds) before converting mic input to text

; If you feel like you are being cut off before you finish your response, increase this value

; If you feel like there is too much of a delay between you finishing your response and the text conversion, decrease this value

; Minimum: 0.5

pause_threshold = 0.5

; listen_timeout:

; How long to wait (in seconds) for the player to speak before retrying

; This needs to be set to ensure that Mantella can periodically check if the conversation has ended

; Recommended: 30

listen_timeout = 30

[LanguageModel]

; model:

; Options: gpt-4, gpt-3.5-turbo, gpt-4-32k, gpt-3.5-turbo-16k

; Recommended: gpt-3.5-turbo-16k

; If using openrouter.ai, here put the name of the model you want to use with openrouter in openrouter's provided format in its documentation. Example: meta-llama/llama-2-70b-chat

; Default: gpt-3.5-turbo

model = gpt-3.5-turbo

; max_response_sentences:

; The maximum number of sentences returned by the LLM. Lower this value to reduce waffling

max_response_sentences = 999

; alternative_openai_api_base:

; If you are using openai's services, leave this alone, otherwise you can change this variable to another base_api that uses openai's api

; For example, if you have a local llm framework or online framework that allows you to use a different url to access openai api functions, you can enter the base_api url here

; Your alternative api_base must support openai's python streaming protocol.

; Examples:

; http://127.0.0.1:5001/v1 for textgenwebui using the default openai extension

; http://localhost:8080/v1 using the default endpoint for Local.ai

; https://openrouter.ai/api/v1 for openrouter

; Ensure that you have the correct secret key set in GPT_SECRET_KEY.txt for the service you are using

; Note that for some services, like textgenwebui, you must enable the openai extension and have the model you want to use preloaded before running mantella

; Leave this value as none to use the normal openai chat gpt models.

alternative_openai_api_base = http://127.0.0.1:5001/v1

; custom_token_count

; If the model chosen is not recognised by Mantella, the token count for the given model will default to this number

; If this is not the correct token count for your chosen model, you can change it here

; Keep in mind that if this number is greater than the actual token count of the model, then Mantella will crash if a given conversation exceeds the model's token limit

; Default: 4096

custom_token_count = 4096

[Speech]

; tts_process_device:

; Whether to run xVASynth server (unless already running) on your CPU or a NVIDIA GPU (with CUDA installed)

; Options: cpu, gpu

tts_process_device = cpu

; pace:

; The default speed of talking. Also varies between voices.

; 0.5 = 2x faster; 2 = 2x slower

; Options: 0.1-2

; Recommended: 1.0

; Note that at the time of writing, this setting does not work with xVASynth v3.0.3 or less, but may work with future releases

pace = 1.0

; use_cleanup:

; Whether to try to reduce noise and the robot-sounding nature of xVASynth generated speech. Has only slight impact on processing speed for the CPU. Not meant to be used on voices that have post-effects attached to them (echoes, reverbs, etc.)

; Options: 0, 1

use_cleanup = 0

; use_sr:

; Whether to improve the quality of your audio through Super-resolution of 22050Hz audio into 48000Hz audio. Keep the Hz setting within xVASynth to something higher like 48000 or 44100. Also to note, this is a fairly slow process on the CPU, but on some GPUs, it can be quick.

; Options: 0, 1

; Recommended: 0

use_sr = 0

[HUD]

; subtitles:

; Whether to display subtitles in the top left corner of the screen

; Options: 0, 1

subtitles = 1

[Cleanup]

; remove_mei_folders

; Clean up older instances of Mantella runtime folders from AppData/Local/Temp/_MEIxxxxxx

; These folders build up over time when Mantella.exe is run

; Enable this option to clean up these previous folders automatically when Mantella.exe is run

; Disable this option if running this cleanup interferes with other Python exes

; For more details on what this is, see here: https://github.com/pyinstaller/pyinstaller/issues/2379

; Options: 0, 1

remove_mei_folders = 0

[Debugging]

; debugging:

; Whether debugging is enabled

; If this is set to 0, the values of all other variables in this section are ignored

; Options: 0, 1

debugging = 0

; play_audio_from_script:

; Whether to play the generated voicelines directly from the script / exe

; Set this value to 1 if testing Mantella while Skyrim is not running

; Options: 0, 1

play_audio_from_script = 1

; debugging_npc:

; Selects the NPC to test

; Set this value to None if you would instead prefer to select an NPC via the mod's spell

; Options: None, NPC name

debugging_npc = None

; use_mic:

; Whether the microphone is enabled

; When this value is set to 0, the sentence contained in default_player_response (see below) will be repeatedly sent to the LLM

; Options: 0, 1

use_mic = 0

; default_player_response:

; The default text sent to the LLM if the microphone is not enabled

default_player_response = Hey, I want to speak with you about it?

; exit_on_first_exchange:

; Whether to end the conversation after the first back and forth exchange

; Set this value to 1 if testing conversation saving on exit functionality

; Options: 0, 1

exit_on_first_exchange = 0

[Prompt]

; prompt:

; The starting prompt sent to the LLM when an NPC is selected

; If you would like to edit this, please ensure that the below dynamic variables are contained in curly brackets {}:

; name = the NPC's name

; bio = the NPC's background description

; trust = how well the NPC knows the player (eg "a stranger", "a friend")

; location = the current location

; time = the time of day as a number (eg 1, 22)

; time_group = the time of day in words (eg "in the morning", "at night")

; language = the selected language

; conversation_summary = reads the latest conversation summaries for the NPC stored in data/conversations/NPC_Name/NPC_Name_summary_X.txt

prompt = You are {name}, and you live in Skyrim. This is your background:\n\n{bio}\n\n

Sometimes in-game events will be passed before the player response between ** symbols. You cannot respond with ** symbols yourself, they only exist to give context. Here is an example:\n\n

*The player picked up a pair of gloves*\n Who do you think these belong to?\n\n

You are having a casual, inconsequential conversation with {trust} (the player) in {location}.

This conversation is a script that will be spoken aloud, so please keep your responses appropriately concise and avoid text-only formatting such as numbered lists.

The time is {time} {time_group}. If you directly refer to the time, please state it as, for example, '10 in the evening' rather than '22:00'.

You are not an AI language model because there are no AI language models in Skyrim. Do not break character / refer to the fact that you are in a video game.

The conversation takes place in {language}.\n\n

{conversation_summary}
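If you plan to edit the prompt, it may help to see how the curly-bracket variables get filled in. The following is only a rough sketch, assuming plain Python `str.format` substitution; it is not taken from Mantella's source. The template is an abridged copy of the prompt above, `build_prompt` is a hypothetical helper, and the example values (NPC name, bio, and so on) are made up for illustration. It also assumes the `\n` sequences in the config end up as real line breaks.

```python
# Abridged copy of the prompt from the config above.
# Assumption: "\n" in the config is turned into a real newline before use.
PROMPT_TEMPLATE = (
    "You are {name}, and you live in Skyrim. This is your background:\n\n{bio}\n\n"
    "You are having a casual, inconsequential conversation with {trust} (the player) in {location}. "
    "The time is {time} {time_group}. "
    "The conversation takes place in {language}.\n\n"
    "{conversation_summary}"
)


def build_prompt(template: str, **values: str) -> str:
    """Fill every {placeholder} in the template (hypothetical helper).

    str.format raises KeyError if the template names a variable
    that was not supplied, which catches typos in an edited prompt.
    """
    return template.format(**values)


# Made-up example values, not read from Mantella's data files.
example = build_prompt(
    PROMPT_TEMPLATE,
    name="Lydia",
    bio="A housecarl sworn to protect the Thane of Whiterun.",
    trust="a stranger",
    location="Whiterun",
    time="10",
    time_group="in the evening",
    language="English",
    conversation_summary="",
)
print(example)
```

The practical takeaway: if you rename or mistype one of the variables while editing the prompt, a substitution like this fails instead of silently leaving the placeholder in the text, so keep the names exactly as listed in the comments.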

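For anyone wondering what `alternative_openai_api_base` actually changes: with an OpenAI-compatible server such as textgen, chat requests go to that base URL instead of OpenAI's servers. Below is a minimal sketch of such a request, built with the Python standard library. It is not how Mantella itself makes the call; `build_chat_request` and the placeholder key are assumptions for illustration. The `/chat/completions` path and the `stream` flag follow the standard OpenAI chat API, which the config comments say the alternative endpoint must support.

```python
import json
import urllib.request

# Same value as alternative_openai_api_base in the config above.
API_BASE = "http://127.0.0.1:5001/v1"


def build_chat_request(api_base: str, api_key: str, model: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request.

    Hypothetical helper for illustration only.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
        "stream": True,  # the config notes say streaming must be supported
    }
    return urllib.request.Request(
        url=api_base.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# "placeholder-key" stands in for whatever is stored in GPT_SECRET_KEY.txt.
request = build_chat_request(API_BASE, "placeholder-key", "gpt-3.5-turbo", "Hello there")
print(request.full_url)

# Sending it requires the local server to be running with a model loaded:
# with urllib.request.urlopen(request) as response:
#     for line in response:
#         print(line.decode("utf-8"), end="")
```

If the printed URL does not match where your local server is listening, Mantella will not be able to reach it either, so this is a quick way to sanity-check the address and port before launching the game.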